Handball Computer Vision POC

Background

Ever since I considered studying Applied AI, I’ve wanted to combine my passion for handball with AI.

I envisioned an AI-driven video analysis tool that analyzes game tactics and generates statistics completely autonomously.

For a long time, it was just an idea floating around in my head.

That changed when I saw this LinkedIn post by Piotr Skalski from Roboflow. He did player detection and tracking for basketball clips, and I said: “I want that for handball!”

First steps with object detection

So I started researching the topic and looked at his implementation. Piotr used a fine-tuned RF-DETR (Roboflow Detection Transformer). Yes, a transformer, the architecture known from LLMs, used here for object detection. The problem was that his model was fine-tuned on basketball videos, which differ enough from handball that it barely detected any players. The path was clear: I needed to fine-tune the model myself on handball-specific videos. Luckily, I found a reasonably decent dataset on Roboflow Universe that I could use for fine-tuning. I ran my first fine-tune in Google Colab with the RF-DETR Nano model. The results were somewhat usable, but many players were not detected and there were many false positives.
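
To put numbers on "players not detected" and "false positives", one option is to compare predicted boxes against hand-labeled boxes on a few frames. The following is a minimal sketch and not part of the original notebook: the function names, the box format, and the 0.5 IoU threshold are all illustrative assumptions, and the greedy matching is simpler than a full mAP evaluation.

```python
from typing import List, Tuple

# Assumed box format: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision_recall(
    predicted: List[Box], ground_truth: List[Box], iou_threshold: float = 0.5
) -> Tuple[float, float]:
    """Greedily match each prediction to at most one unmatched labeled box."""
    unmatched = list(ground_truth)
    true_positives = 0
    for pred in predicted:
        best = max(unmatched, key=lambda gt: iou(pred, gt), default=None)
        if best is not None and iou(pred, best) >= iou_threshold:
            unmatched.remove(best)
            true_positives += 1
    # Low precision means many false positives; low recall means missed players.
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(ground_truth) if ground_truth else 0.0
    return precision, recall
```

A low recall corresponds to missed players and a low precision to false positives, so tracking both between fine-tunes shows which of the two problems a change actually addressed.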

Second fine-tune of the object detection model

I then figured that using a larger variant of the model, RF-DETR Small, would improve performance, which it did, but I also noticed that the dataset was far from perfect. I manually reviewed and improved the dataset’s annotations by correcting mislabeled players, adding missing annotations, and including more diverse scenes. These changes greatly improved the performance of the second fine-tune (this time I also fine-tuned the model directly in Roboflow Universe).

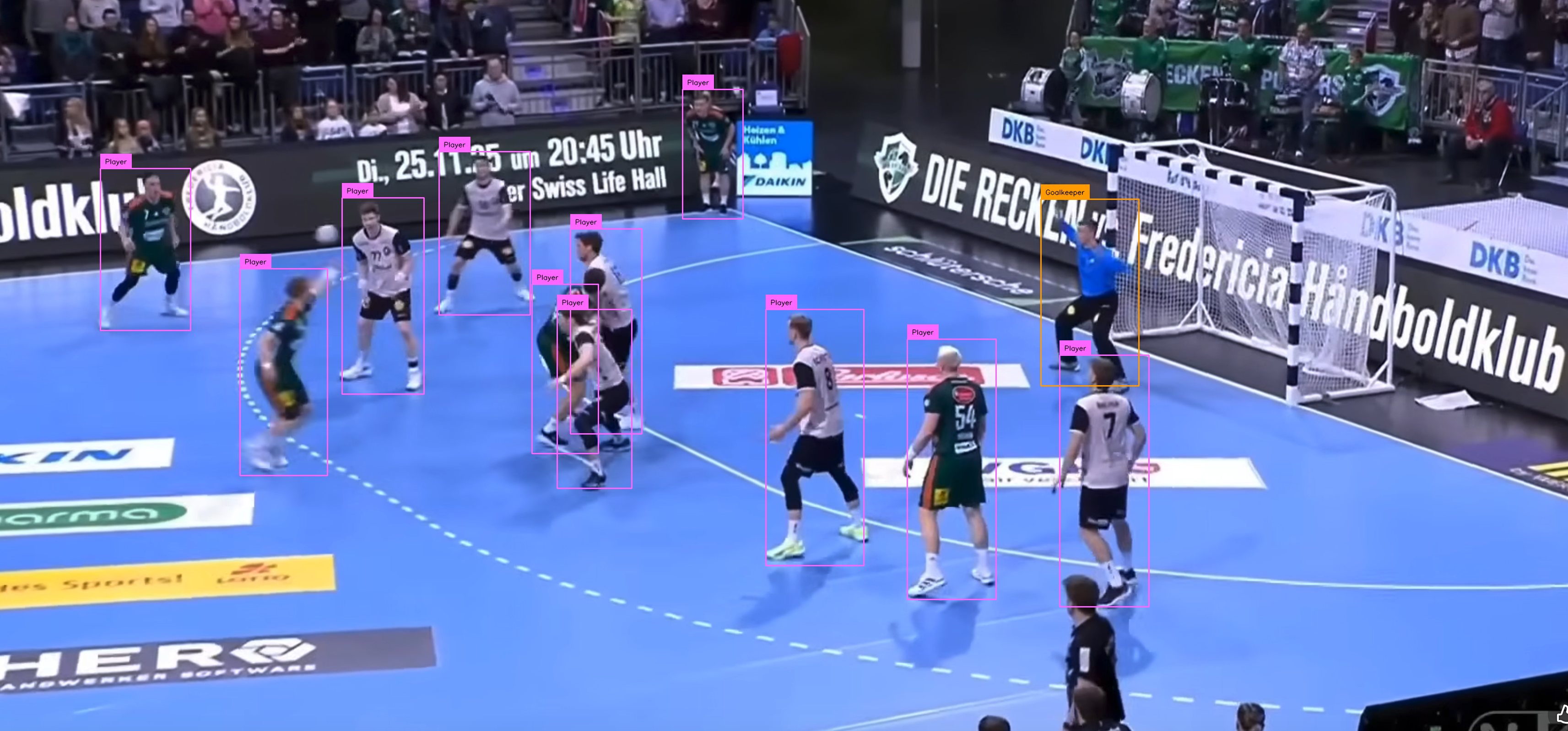

Running RF-DETR on an entire video clip looks like this:

Player tracking

At this point I adapted Roboflow’s “Basketball AI: How to Detect, Track, and Identify Basketball Players” notebook to my fine-tuned model and handball use case.

The next step was to use the detections from the first frame to prompt SAM2 for image segmentation.

Using SAM2 for segmentation based on the first frame’s detections, we get this output:

The tracking isn’t perfect yet: the camera operator is mistakenly tracked, and players are sometimes lost when they re-enter the frame or during occlusions.

Future Work

While the current POC demonstrates the core functionality, there’s plenty of room for improvement and expansion:

Improve Detection & Tracking Robustness

- Data: Collect more handball-specific footage (e.g., from different leagues or camera angles) to reduce false positives (referees/bystanders) and improve recall for occluded players.

- Post-processing: Add heuristics (e.g., player size/position filters) to reject non-player detections.
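
A minimal sketch of what such a size/position filter could look like, assuming detections arrive as pixel boxes with a confidence score. The `Detection` class, `filter_detections`, and every threshold are hypothetical and would need tuning on real footage and camera angles.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Detection:
    # Hypothetical container; real detector outputs will look different.
    x_min: float
    y_min: float
    x_max: float
    y_max: float
    confidence: float


def filter_detections(
    detections: List[Detection],
    frame_height: float,
    min_height_frac: float = 0.05,   # assumed: smaller boxes are noise
    max_height_frac: float = 0.6,    # assumed: larger boxes are close-up bystanders
    min_foot_y_frac: float = 0.25,   # assumed: top quarter of the frame is the stands
    min_confidence: float = 0.5,
) -> List[Detection]:
    """Keep detections whose size and foot position are plausible for a player."""
    kept = []
    for det in detections:
        height_frac = (det.y_max - det.y_min) / frame_height
        foot_y_frac = det.y_max / frame_height  # bottom edge approximates the feet
        if det.confidence < min_confidence:
            continue
        if not (min_height_frac <= height_frac <= max_height_frac):
            continue
        if foot_y_frac < min_foot_y_frac:
            continue  # box ends inside the assumed spectator strip
        kept.append(det)
    return kept
```

Fixed fractions like these only hold for a roughly static camera; with a panning or zooming camera, a court mask would likely be a more reliable position test.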

Team Classification

- Use SigLIP to cluster players by team based on jersey colors/logos.

- Implement a simple UI to manually assign team names (e.g., “Home” vs. “Away”) for semi-automated labeling.
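
To illustrate the clustering idea, here is a small sketch that splits players into two groups with a hand-written 2-means loop. It assumes each player crop has already been turned into a feature vector (for example a SigLIP embedding); the toy vectors in the usage example are plain RGB colors standing in for real embeddings, and `two_means` is a hypothetical helper, not a library function.

```python
from typing import List, Sequence


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def two_means(features: List[Sequence[float]], iterations: int = 10) -> List[int]:
    """Assign each feature vector to cluster 0 or 1."""
    if not features:
        return []
    # Deterministic init: the first vector and the vector farthest from it.
    farthest = max(features, key=lambda f: squared_distance(f, features[0]))
    centers = [list(features[0]), list(farthest)]
    labels = [0] * len(features)
    for _ in range(iterations):
        # Assignment step: nearest center wins.
        labels = [
            0 if squared_distance(f, centers[0]) <= squared_distance(f, centers[1]) else 1
            for f in features
        ]
        # Update step: move each center to the mean of its members.
        for k in (0, 1):
            members = [f for f, label in zip(features, labels) if label == k]
            if members:
                centers[k] = [sum(dim) / len(members) for dim in zip(*members)]
    return labels


# Toy stand-ins for embeddings: three reddish and two bluish jerseys.
jersey_features = [(200, 20, 20), (210, 30, 25), (20, 20, 200), (25, 30, 210), (205, 25, 22)]
team_labels = two_means(jersey_features)
```

The cluster indices carry no meaning by themselves, which is where the manual step of assigning team names would come in. Referees and goalkeepers wear different colors and would need separate handling.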

Jersey Number Recognition

- Extend RF-DETR to also detect jersey numbers.

- Fine-tune SmolVLM2 for OCR on cropped jersey regions.

- Validate numbers across frames to reduce misreads (e.g., “6” vs. “8”).
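
Cross-frame validation could be as simple as a majority vote per tracked player. The sketch below assumes that tracking yields a stable `track_id` and that some OCR step returns a number string per frame; `vote_jersey_numbers` and the `min_votes` threshold are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Optional, Tuple


def vote_jersey_numbers(
    readings: Iterable[Tuple[int, str]], min_votes: int = 3
) -> Dict[int, Optional[str]]:
    """Pick the most frequent reading per track.

    readings: (track_id, number_read) pairs, one per frame in which
    the OCR step produced a value for that track.
    """
    votes: Dict[int, Counter] = defaultdict(Counter)
    for track_id, number in readings:
        votes[track_id][number] += 1

    result: Dict[int, Optional[str]] = {}
    for track_id, counter in votes.items():
        number, count = counter.most_common(1)[0]
        # Too few agreeing frames: leave the number undecided.
        result[track_id] = number if count >= min_votes else None
    return result
```

With readings such as "6", "8", "6", "6" for one track, the single "8" is outvoted, while a track with only a couple of conflicting readings stays undecided instead of getting a guessed number.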

Court Mapping & Analytics

- Detect court keypoints (e.g., goalposts, center line) to map player positions to tactical diagrams.

- Generate stats like player heatmaps, possession chains, or defensive formations.
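
Once player positions are available in court coordinates, a heatmap is just a 2D histogram. The sketch below assumes the pixel-to-court mapping (for example via the detected keypoints) has already been done and that positions are given in meters on a standard 40 m x 20 m handball court; `position_heatmap` and the cell size are illustrative choices.

```python
from typing import Iterable, List, Tuple

COURT_LENGTH_M = 40.0  # standard handball court length
COURT_WIDTH_M = 20.0   # standard handball court width


def position_heatmap(
    positions: Iterable[Tuple[float, float]], cell_size_m: float = 5.0
) -> List[List[int]]:
    """Count how often a player was seen in each court cell.

    positions: (x, y) in meters, x along the court length, y along the width.
    Returns a grid indexed as grid[row][col], rows along the width.
    """
    cols = int(COURT_LENGTH_M / cell_size_m)
    rows = int(COURT_WIDTH_M / cell_size_m)
    grid = [[0] * cols for _ in range(rows)]
    for x, y in positions:
        if not (0.0 <= x <= COURT_LENGTH_M and 0.0 <= y <= COURT_WIDTH_M):
            continue  # skip positions mapped outside the court
        # Clamp so points exactly on the far boundary land in the last cell.
        col = min(int(x / cell_size_m), cols - 1)
        row = min(int(y / cell_size_m), rows - 1)
        grid[row][col] += 1
    return grid
```

Feeding in one position per player per frame gives a time-weighted heatmap; the resulting grid can be passed to any plotting library for visualization.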

Dataset Expansion

- Explore synthetic data generation using the newly released SAM3 and text prompts.

Goal: A fully autonomous system that not only detects and tracks players but also provides actionable insights for tactical analysis.